The widespread adoption of artificial intelligence is transforming the way we work, how we do business, and how we live our daily lives. It’s no wonder that its proliferation is raising deep concerns about trusting this technology.

A KPMG global study on trust in AI revealed that 61% of people are wary about trusting AI systems while 67% report low to moderate acceptance of AI. From cyberattacks and fake news to medical misdiagnosis, the risks of AI loom large alongside the wealth of opportunities that it brings. With these concerns in mind and with AI likely to dominate the global discourse for the foreseeable future, it’s important to develop frameworks and practices that ensure the responsible and ethical use of AI technologies.

The terms "responsible AI" and "ethical AI" are often used interchangeably, which blurs the differences between them and how each is put into practice. In this article, I’ll discuss the nuances of responsible AI versus ethical AI and explore a comprehensive framework for implementing responsible AI practices. Finally, I’ll show how MACH architecture enables responsible AI practices and works effectively alongside them.

Breaking Down “Responsible AI” vs. “Ethical AI”

Generally speaking, ethical AI relates to the values and principles guiding AI development and usage, with considerations for cultural and societal norms. It encompasses broader philosophical and moral considerations that may vary across different cultures and contexts.

On the other hand, responsible AI focuses on the practical and tactical aspects of how AI technologies are developed, deployed, and managed. While ethical AI addresses overarching questions of right and wrong, responsible AI delves into the specific strategies and actions needed to mitigate risks and ensure accountability. Essentially, responsible AI is all about ensuring that AI systems are used in a way that minimizes harm and maximizes benefits.

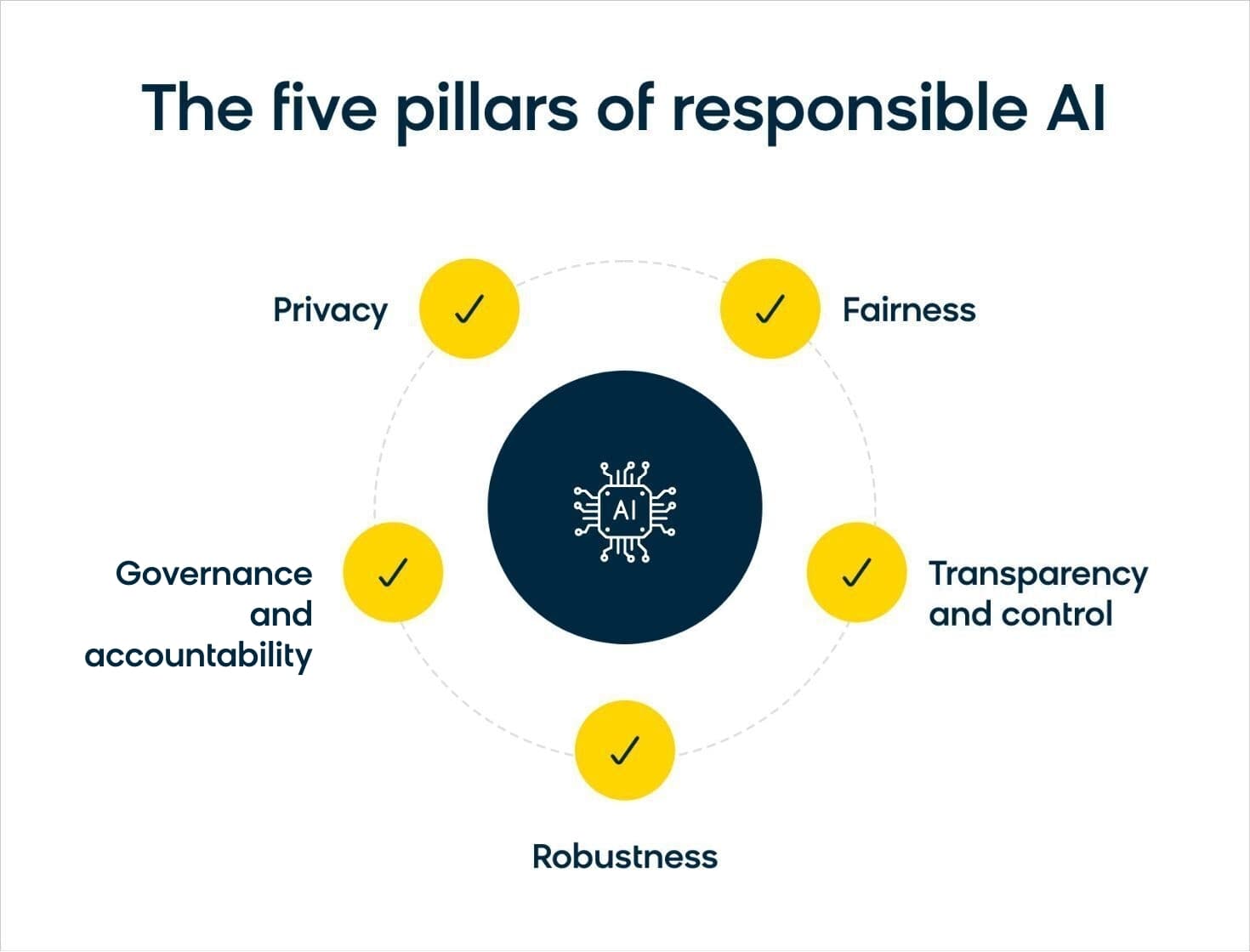

The five pillars of responsible AI are:

- Privacy

- Fairness

- Transparency and control

- Robustness

- Governance and accountability

Let’s explore each of these pillars in more detail and see how they fit together in a responsible AI framework.

An Overview of a Responsible AI Framework

Responsible AI frameworks are crucial for making sure that AI technologies are developed, deployed, and used in an accountable manner. In particular, they should address the following areas:

Privacy

Privacy is a fundamental aspect of responsible AI, especially in today's data-driven world. To safeguard user privacy, privacy-enhancing technologies (PETs) play a crucial role. These technologies, widely used in the ecommerce space, help minimize the amount of data processed to protect personal information.

They include techniques such as:

- Federated learning: This keeps sensitive data on the user's device during model training. Only model updates, not the raw data, are sent back to be aggregated.

- Differential privacy: This works by adding calibrated random noise to a dataset or to the results of queries run against it. The noise masks the contribution of any individual data point, making it difficult to discern any specific individual's information while keeping aggregate results useful.

- Secure multi-party computation (SMPC): This lets several organizations jointly compute a result over their combined data while each party's inputs stay encrypted or otherwise hidden, limiting what any one party can learn about the others' data.

In this way, PETs add layers of protection to user data, preventing unauthorized access and maintaining anonymity.
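To make the noise-adding idea behind differential privacy concrete, here is a minimal sketch of the Laplace mechanism applied to a simple counting query. The function names (`laplace_noise`, `private_count`) and the sample data are hypothetical and chosen only for illustration. The sketch assumes a query with sensitivity 1, meaning that adding or removing one person changes the true count by at most 1.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise.

    The difference of two independent exponential samples with mean
    `scale` follows a Laplace distribution with that scale.
    """
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(values, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count of the values that match `predicate`.

    Assumption: a counting query has sensitivity 1, so the noise scale
    is 1 / epsilon. Smaller epsilon means more noise and more privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random()
    ages = [25, 31, 47, 52, 38]  # illustrative data only
    print(private_count(ages, lambda age: age > 30, epsilon=0.5, rng=rng))
```

The printed value differs on every run, which is the point: an observer cannot tell from a single answer whether any one person was included in the data.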

Fairness

Ensuring fairness in AI systems is essential to prevent bias and discrimination. Fairness involves analyzing demographic splits in datasets and training models to be unbiased across different groups. Techniques like synthetic data generation and careful data collection help mitigate biases in training data, ensuring equitable outcomes for all users.
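One simple way to analyze demographic splits is to compare how often a model produces a positive outcome for each group. The sketch below computes per-group selection rates and the gap between the highest and lowest rate, a measure often called the demographic parity difference. The function names and the group labels are hypothetical, and this is only one of several fairness metrics an organization might choose.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of positive predictions per group.

    `records` is an iterable of (group, predicted_positive) pairs.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, predicted_positive in records:
        totals[group] += 1
        positives[group] += int(bool(predicted_positive))
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records) -> float:
    """Return the difference between the highest and lowest selection rate."""
    rates = selection_rates(records)
    if not rates:
        raise ValueError("no records supplied")
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Illustrative predictions for two hypothetical groups.
    sample = [("a", True), ("a", False), ("b", True), ("b", True)]
    print(selection_rates(sample))
    print(demographic_parity_gap(sample))
```

A gap near zero suggests the model selects each group at a similar rate. A large gap is a signal to revisit the training data or the model, not proof of discrimination on its own.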

Transparency and Control

Transparency and control empower users to understand and influence how AI systems interact with them. Model documentation, such as model cards and system cards, provides insights into how models operate and their data sources. Giving users control over personalized experiences, coupled with features like ad preferences and explanation prompts, fosters trust and accountability in AI systems.
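A model card can be as simple as a structured record published alongside the model. The following sketch shows one possible shape. The field names and the example values are assumptions made for illustration and do not follow any particular standard.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """A minimal, hypothetical model card."""

    name: str
    version: str
    intended_use: str
    data_sources: list = field(default_factory=list)
    known_limitations: list = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the card so it can be published with the model."""
        return json.dumps(asdict(self), indent=2)


if __name__ == "__main__":
    card = ModelCard(
        name="product-recommender",  # hypothetical model name
        version="1.0.0",
        intended_use="Rank catalog items for signed-in shoppers.",
        data_sources=["first-party browsing events"],
        known_limitations=["sparse data for new users"],
    )
    print(card.to_json())
```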

Robustness

Robustness is the resilience of AI systems against adversarial attacks and unintended behaviors. Although this area is still maturing, common techniques for improving robustness include model versioning, anomaly detection, and continuous monitoring. These measures help detect and mitigate vulnerabilities, improving the reliability and security of AI applications.
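As a rough illustration of anomaly detection in continuous monitoring, the sketch below flags recent metric values that sit far from a baseline, using a z-score. The function name, the default threshold of 3 standard deviations, and the sample accuracy values are all assumptions. Production monitoring would typically use more robust statistics and larger windows.

```python
from statistics import mean, pstdev


def find_anomalies(baseline, recent, threshold: float = 3.0):
    """Return the values in `recent` that deviate strongly from `baseline`.

    A value is flagged when it lies more than `threshold` standard
    deviations from the baseline mean.
    """
    if not baseline:
        raise ValueError("baseline must not be empty")
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        # A constant baseline: any different value counts as anomalous.
        return [x for x in recent if x != mu]
    return [x for x in recent if abs(x - mu) / sigma > threshold]


if __name__ == "__main__":
    # Illustrative daily accuracy readings for a hypothetical model.
    baseline_accuracy = [0.90, 0.91, 0.89, 0.90, 0.92, 0.88]
    recent_accuracy = [0.90, 0.55, 0.91]
    print(find_anomalies(baseline_accuracy, recent_accuracy))
```

With the sample data, only the sharp drop to 0.55 is flagged, which is the kind of signal that should trigger an investigation or a rollback to an earlier model version.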

Governance and Accountability

Effective governance and accountability mechanisms are essential for overseeing AI systems throughout their lifecycle. Establishing model registries, tracking model usage, and implementing clear ownership and accountability structures are vital steps. Additionally, defining processes for model maintenance, updates, and compliance ensures that AI systems remain aligned with ethical and regulatory standards.
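A model registry does not need to be elaborate to be useful. The sketch below is an in-memory illustration that records who owns each model version and who has called it. The class and method names are hypothetical, and a real registry would persist its records and enforce access control.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ModelRecord:
    """Ownership and usage information for one model version."""

    name: str
    version: str
    owner: str
    registered_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )
    usage_log: list = field(default_factory=list)


class ModelRegistry:
    """A minimal in-memory registry keyed by (name, version)."""

    def __init__(self):
        self._records = {}

    def register(self, name: str, version: str, owner: str) -> ModelRecord:
        key = (name, version)
        if key in self._records:
            raise ValueError(f"{name} {version} is already registered")
        record = ModelRecord(name=name, version=version, owner=owner)
        self._records[key] = record
        return record

    def log_usage(self, name: str, version: str, caller: str) -> None:
        # Raises KeyError for unregistered models, so untracked models
        # cannot be used silently.
        record = self._records[(name, version)]
        record.usage_log.append((datetime.now(timezone.utc), caller))

    def owner_of(self, name: str, version: str) -> str:
        return self._records[(name, version)].owner


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.register("churn-model", "2.1", owner="data-science-team")
    registry.log_usage("churn-model", "2.1", caller="crm-service")
    print(registry.owner_of("churn-model", "2.1"))
```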

A comprehensive responsible AI framework encompasses all of these aspects. By implementing such frameworks, organizations can build trust with users, mitigate risks, and maximize the benefits of AI technologies for all stakeholders involved.

Applying a MACH Approach to Responsible AI

MACH (microservices, API-first, cloud-native, headless) architecture provides a robust framework that enables organizations to implement responsible AI practices in several key ways:

- Microservices: MACH architecture promotes a modular approach to software development, allowing AI functionalities to be implemented as microservices. Each microservice can be individually monitored, updated, and audited, facilitating responsible AI governance.

- API-first approach: With an API-first approach, AI functionalities can be exposed through well-defined APIs, enabling easy integration with other systems and applications. This facilitates interoperability and data exchange, which are essential for implementing responsible AI practices such as fairness, transparency, and privacy. By providing standardized interfaces, MACH architecture makes it easier to scrutinize and audit AI systems for compliance with regulatory standards and ethical guidelines.

- Cloud-native infrastructure: MACH architecture leverages cloud-native infrastructure, enabling businesses to deploy AI applications in scalable, flexible, and cost-effective environments. Cloud platforms offer built-in security features, data encryption, and compliance certifications, which are crucial for ensuring the privacy and security of sensitive information used by AI systems. Additionally, cloud-native architectures provide robust monitoring and logging capabilities, allowing businesses to track and analyze AI performance metrics and detect any anomalies or biases in real time.

- Headless ecommerce platforms: Headless ecommerce platforms decouple the front-end presentation layer from the back-end functionality, enabling businesses to deliver personalized and adaptive user experiences. This flexibility is particularly important for implementing responsible AI practices such as user consent management, data anonymization, and algorithmic transparency. By separating content delivery from business logic, headless architectures empower businesses to customize AI-driven interactions based on user preferences and consent settings, while ensuring compliance with data protection regulations such as the GDPR and CCPA.
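To illustrate how consent settings can gate AI-driven interactions in a decoupled back end, here is a minimal sketch of a recommendation function that only personalizes when the user has opted in. Everything in it is hypothetical: the `ConsentSettings` type, the `recommend` function, and the naive "most viewed first" ranking stand in for whatever consent service and model a real stack would use.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ConsentSettings:
    """User consent flags; personalization is off unless granted."""

    personalization: bool = False


def recommend(user_history, consent: ConsentSettings, default_items, limit: int = 3):
    """Return up to `limit` items, personalized only with consent.

    Without consent, the user's history is never read and a generic
    default list is returned instead.
    """
    if not consent.personalization:
        return list(default_items[:limit])
    # Naive stand-in for a model: rank items by how often they were viewed.
    ranked = [item for item, _ in Counter(user_history).most_common(limit)]
    return ranked


if __name__ == "__main__":
    history = ["shoes", "hat", "shoes"]
    defaults = ["bestseller-1", "bestseller-2", "bestseller-3"]
    print(recommend(history, ConsentSettings(personalization=True), defaults))
    print(recommend(history, ConsentSettings(), defaults))
```

Because the consent check lives in the back-end function rather than in any one front end, every channel that calls it gets the same behavior.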

MACH architecture provides a solid foundation for implementing responsible AI practices by promoting modularity, interoperability, security, and transparency. The inherent advantages of MACH architecture mean that businesses can build AI systems that are aligned with regulatory requirements and meet societal expectations. For your business to effectively adopt responsible AI, you’ll want to look for solutions in the MACH Alliance, which brings together best-of-breed technologies in ecommerce.

However, one key consideration you’ll have to make when putting together your MACH-compliant tech stack is data. If you’re not careful or strategic with your approach, you’ll wind up with AI tools that aren’t properly communicating with each other. This is why it’s so crucial to properly unify your data. If you’re able to process all your data in real time, you’ll be able to seamlessly apply all your responsible AI processes throughout your entire tech stack.

Moving Forward With Responsible AI

By focusing on a responsible AI framework, organizations can mitigate risks and maximize the positive impact of AI technologies. As AI continues to evolve, ongoing research, collaboration, and innovation are essential for advancing responsible AI practices and fostering trust in AI-driven systems.

Responsible AI entails a multifaceted approach encompassing privacy, fairness, transparency, robustness, and governance. By embracing these principles and integrating them into AI development and deployment processes, organizations can build trustworthy and ethically aligned AI systems that benefit business and society as a whole. As we navigate the complexities of AI, a commitment to responsible AI practices is key to shaping a more inclusive, equitable, and sustainable future.